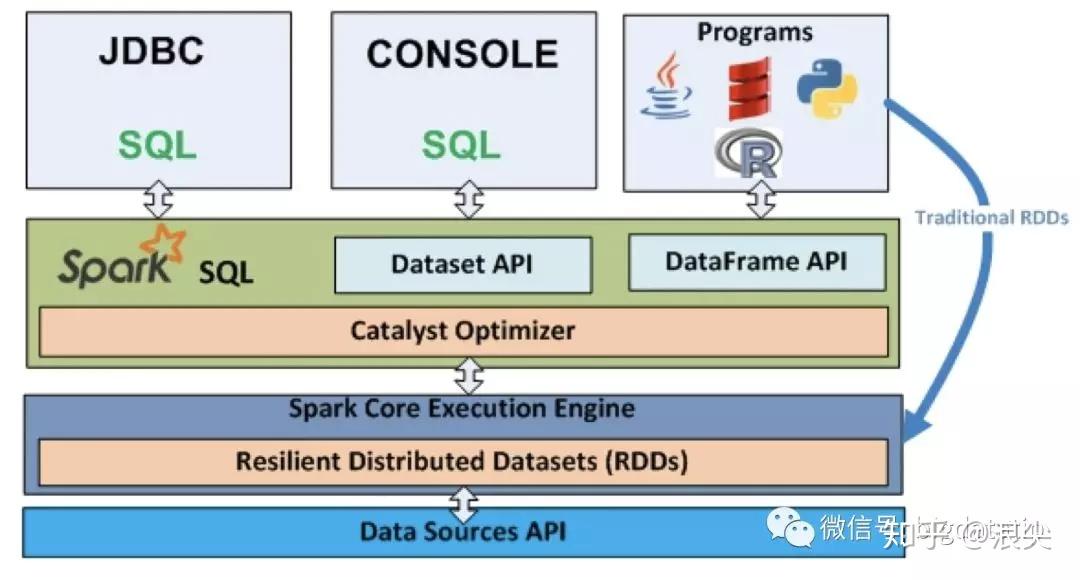

Spark SQL has two APIs: SQL and the DataFrame DSL (Domain Specific Language).

Spark SQL allows users to read and write data in a variety of formats, including Hive, JSON, Parquet, ORC, Avro and JDBC. Users can connect to any data source the same way through SchemaRDDs (the abstraction that later Spark releases call DataFrames) and can also join data across multiple data sources. Below is a Spark SQL example that shows a query and join on different data sources:

`result = context.sql("""SELECT * FROM EMPLOYEE JOIN json …""")`

iii) Performance and Scalability

Spark SQL performs much better than Hadoop MapReduce because of in-memory computing. It includes a cost-based optimizer, columnar storage and code generation for faster execution of queries. Spark SQL also has various performance tuning options, such as memory settings, codegen, batch sizes and compression codecs.

In Hive, users have to explicitly declare the schema. Hive has its own JDBC server, the Hive Thrift Server. Spark SQL does not have its own JDBC server but uses the Hive Thrift Server.
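The join across data sources mentioned above could be sketched in PySpark roughly as follows. This is a minimal sketch, not the article's original code: the table name `employee`, the view name `employee_json`, the join key `id` and the helper names are all hypothetical, and it assumes a Spark installation with Hive support.

```python
def build_join_query(table: str, json_view: str, key: str) -> str:
    """Build a SQL join between a catalog table and a JSON-backed view.

    All three names are supplied by the caller; the ones used in
    run_join below are hypothetical examples.
    """
    return (
        f"SELECT * FROM {table} e "
        f"JOIN {json_view} j ON e.{key} = j.{key}"
    )


def run_join(json_path: str):
    """Join a Hive table with a JSON file through a single SQL query."""
    # Imported lazily so build_join_query can be used without Spark installed.
    from pyspark.sql import SparkSession

    spark = (
        SparkSession.builder
        .appName("multi-source-join")
        .enableHiveSupport()  # makes Hive tables visible to spark.sql()
        .getOrCreate()
    )
    # Register the JSON file as a temporary view so SQL can refer to it by name.
    spark.read.json(json_path).createOrReplaceTempView("employee_json")
    # "employee" is assumed to be an existing Hive table with an "id" column.
    return spark.sql(build_join_query("employee", "employee_json", "id"))
```

Calling `run_join("employees.json")` would return a DataFrame; nothing executes on the cluster until an action such as `.show()` is called on it.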

Hive requires a metastore to be created before Hive queries can run. In Spark SQL, a metastore is optional, and Spark SQL can use the Hive metastore.
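As a rough illustration of the metastore being optional, a Spark session can be built either against the Hive metastore or against Spark's own in-memory catalog. The sketch below assumes PySpark is installed; the helper names are hypothetical. `spark.sql.catalogImplementation` is the setting that `enableHiveSupport()` switches to `hive` behind the scenes.

```python
def catalog_setting(use_hive_metastore: bool) -> dict:
    """Return the session setting that selects the catalog backend."""
    implementation = "hive" if use_hive_metastore else "in-memory"
    return {"spark.sql.catalogImplementation": implementation}


def make_session(use_hive_metastore: bool):
    """Create a SparkSession with or without the Hive metastore."""
    # Imported lazily so catalog_setting can be used without Spark installed.
    from pyspark.sql import SparkSession

    builder = SparkSession.builder.appName("metastore-demo")
    for key, value in catalog_setting(use_hive_metastore).items():
        builder = builder.config(key, value)
    return builder.getOrCreate()
```

In everyday code, `SparkSession.builder.enableHiveSupport()` is the usual shortcut for the Hive case; the explicit setting is spelled out here only to make the two options visible side by side.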

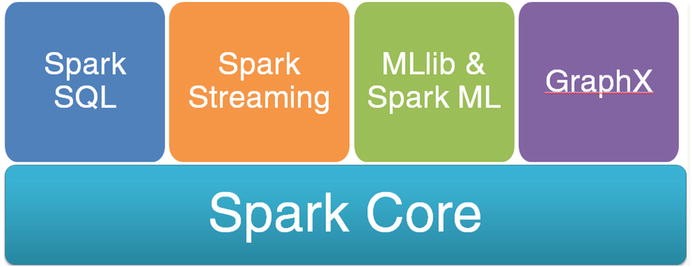

Spark SQL is a library, so integrating it with other libraries in the Spark ecosystem is easy.

Spark SQL vs. HiveQL: Advantages of Spark SQL over HiveQL

Faster Execution - Spark SQL is faster than Hive. For example, a query that takes 5 minutes to execute in Hive can take less than half a minute in Spark SQL.

No Migration Hurdles - Though both HiveQL and Spark SQL follow the SQL way of writing queries, their syntax is not identical. An organization that has used Hive for several years would find it difficult to rewrite all of its Hive queries in Spark SQL to attain performance gains. However, Spark SQL allows HiveQL queries to be executed directly, without any changes to the code, which makes migration from HiveQL to Spark SQL easier. Developers can continue to write queries in HiveQL, and during execution they are automatically converted to Spark SQL.

Supports Real-Time Processing - Unlike Hive, which supports only batch processing (where historical data is stored and later used for processing), Spark SQL supports real-time querying by using the metastore services of Hive to query the data stored and managed by Hive. Spark SQL does not require developers to create a new metastore, as it can directly use the existing Hive metastore.
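One way to picture running existing HiveQL unchanged is to feed the statements of an existing script to `spark.sql()` one at a time. This is only a sketch under assumptions: the helper names are hypothetical, the splitter is deliberately naive (it would break on a `;` inside a string literal), and the session needs Hive support so the tables in the Hive metastore are visible.

```python
from typing import List


def split_statements(script: str) -> List[str]:
    """Split a HiveQL script into statements on ';'.

    Naive on purpose: it does not handle ';' inside quoted strings or comments.
    """
    return [part.strip() for part in script.split(";") if part.strip()]


def run_hiveql_script(script: str):
    """Run every statement of a HiveQL script through Spark SQL, in order."""
    # Imported lazily so split_statements can be used without Spark installed.
    from pyspark.sql import SparkSession

    spark = (
        SparkSession.builder
        .appName("hiveql-on-spark")
        .enableHiveSupport()  # use the existing Hive metastore
        .getOrCreate()
    )
    result = None
    for statement in split_statements(script):
        result = spark.sql(statement)
    # DataFrame for the last statement, or None if the script was empty.
    return result
```

For example, `split_statements("USE sales;\nSELECT * FROM orders;")` yields the two statements `USE sales` and `SELECT * FROM orders`, which `run_hiveql_script` would then execute in sequence.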

Spark SQL, which runs on top of Apache Spark, was developed to overcome the limitations posed by Hive. In Apache Hive, SQL developers write queries in a SQL-like way, and these are converted to MapReduce jobs. MapReduce is slow by nature, so Hive lags in performance on small and medium-sized datasets of less than 200 GB.

Another major problem with Apache Hive for relational data processing is that it has no job resume capability. Any interesting workflow pipeline is likely to contain a long series of SQL statements; consider, for instance, a pipeline with 2K lines of Apache Hive code and 50 SQL statements. If processing dies in the middle because of an error or a breakdown of the Hadoop cluster, Apache Hive cannot resume processing from the breakpoint.

From a security standpoint, Hive cannot drop encrypted databases. Only soft deletes are supported in Hive, meaning that dropped data is moved to the trash, and a complete encryption zone cannot be deleted or moved into the trash this way. The problem arises when users encrypt the whole /user/hive/warehouse directory: when they then try to drop a database in Hive, the operation fails because the database is part of the encryption zone.

Spark SQL overcomes all the above limitations of Apache Hive for relational data processing.
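To make the job-resume limitation concrete, here is a small, engine-agnostic sketch of what resuming a pipeline of SQL statements from the breakpoint would involve. It is not a Hive or Spark API; `run_with_resume` and its `execute` callback are hypothetical names, and a real pipeline would also have to persist the returned index somewhere durable.

```python
from typing import Callable, List


def run_with_resume(
    statements: List[str],
    execute: Callable[[str], None],
    start_at: int = 0,
) -> int:
    """Run statements in order, beginning at index start_at.

    Returns the index of the first statement that failed, so a later call
    can pass it as start_at, or len(statements) if everything succeeded.
    """
    for index in range(start_at, len(statements)):
        try:
            execute(statements[index])
        except Exception:
            # Stop at the failing statement; earlier ones are not rerun later.
            return index
    return len(statements)
```

With a 50-statement pipeline that fails at statement 31, the first call would return 30 (zero-based), and a second call with `start_at=30` would skip the 30 statements that had already completed.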
